UX Design for AI Interfaces

How trust, transparency, and control are designed in AI-powered applications — and which UX patterns help achieve this.

AI-powered interfaces present fundamentally new challenges for UX design. When systems autonomously make decisions, generate content, or provide recommendations, the user's role changes. Deterministic tools give way to probabilistic systems whose behavior is not always predictable. The central question is: How do we design interfaces that people trust, that they can use with confidence — and that honestly communicate the actual capabilities and limitations of the technology?

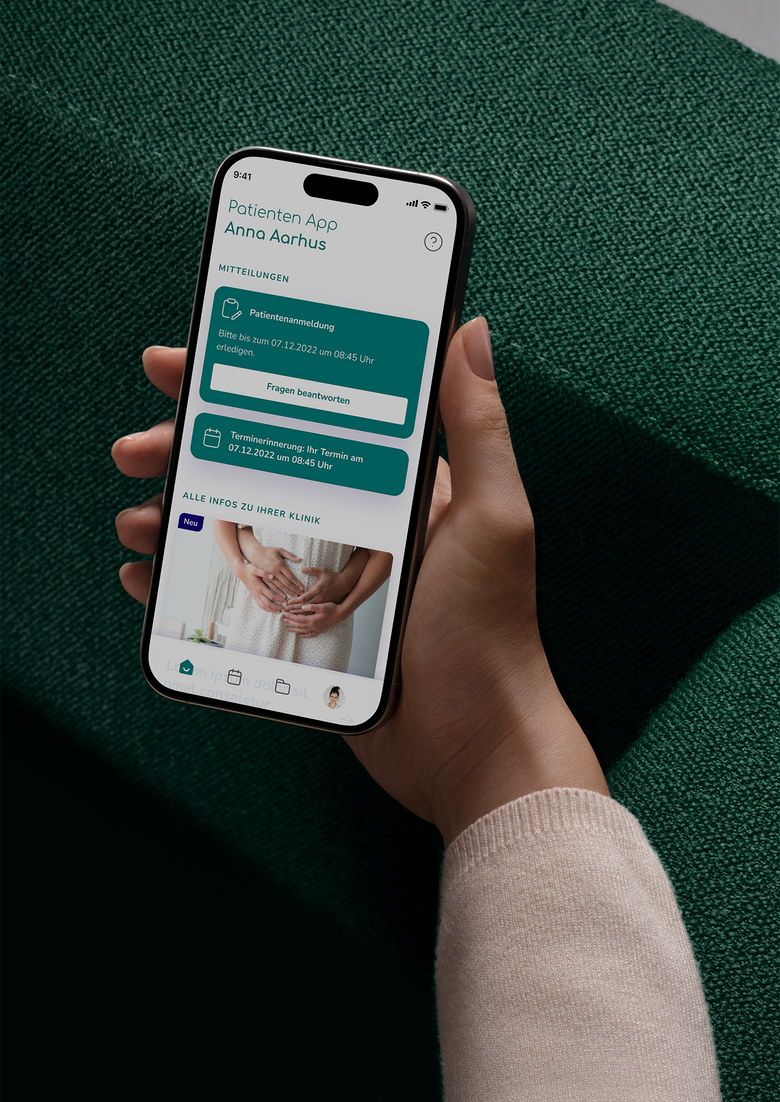

I have worked on this question for several years. In my work for Fobizz, an EdTech platform for teachers that offers numerous AI features, I experienced firsthand how crucial interface design is for the adoption of AI tools in education. Teachers working with generative AI for the first time need different onboarding patterns and trust signals than tech-savvy early adopters. In my collaboration with MEQO, a software provider in the MedTech sector, reliability and traceability were paramount: when AI-powered interfaces deliver recommendations in healthcare, errors have real consequences for people.

From these and other projects, I have developed a systematic design approach that encompasses five dimensions: expectation management, transparency, control, error tolerance, and the design of human-AI collaboration.

Expectation Management: Establishing the Right Mental Model

The greatest UX challenge with AI interfaces lies not in the technology itself, but in the gap between user expectations and actual system behavior. Research by the Nielsen Norman Group shows that users form a lasting mental model of the AI within the first two to three interactions. This model determines whether they trust the system, use it effectively, or abandon it after the first disappointment.

Users draw on familiar archetypes: some see AI as an all-knowing oracle, others as an obedient tool, and still others as an inexperienced intern who needs supervision. Each of these mental models has consequences for usage behavior. Those who consider AI infallible do not verify results — with potentially serious consequences. Those who consider it unreliable do not use it at all.

Effective expectation management therefore begins during onboarding: What tasks can the system reliably handle? Where are its known weaknesses? Concrete examples of both good and poor results help users develop a realistic picture. Specific statements such as "This model correctly identifies X in Y% of cases" build trust far more effectively than abstract performance promises.

Transparency and Explainability: From Black Box to Understanding

Users need to understand when and why an AI is active. This means: results should be traceable, data sources disclosed, and automated decisions clearly labeled as such. Transparency builds trust and forms the foundation for informed use. However, the right degree of transparency depends on context.

Research describes a spectrum of explainability that ranges from simple labeling to interactive exploration. In low-risk scenarios, a simple label suffices: "AI-generated" or "Automatically suggested." In medium-risk scenarios — such as e-commerce or project management — users expect a rationale: "This suggestion is based on your last three orders." In high-risk scenarios, such as medicine or finance, traceable chains of reasoning with source references and the ability to examine the decision logic step by step are required.
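The three tiers described above can be sketched as a simple lookup. This is a minimal illustration, not a prescribed implementation; the names `Risk`, `ExplanationLevel`, and `explanation_for` are hypothetical.

```python
from enum import Enum


class Risk(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


class ExplanationLevel(Enum):
    LABEL = "label"                      # e.g. "AI-generated"
    RATIONALE = "rationale"              # e.g. "Based on your last three orders"
    REASONING_CHAIN = "reasoning_chain"  # step-by-step logic with source references


# Assumed mapping that mirrors the low / medium / high tiers in the text.
_LEVEL_BY_RISK = {
    Risk.LOW: ExplanationLevel.LABEL,
    Risk.MEDIUM: ExplanationLevel.RATIONALE,
    Risk.HIGH: ExplanationLevel.REASONING_CHAIN,
}


def explanation_for(risk: Risk) -> ExplanationLevel:
    """Return the minimum explanation depth the interface should offer."""
    return _LEVEL_BY_RISK[risk]
```

In a real product the risk tier would likely be set per feature during design rather than computed at runtime.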

A common mistake in this regard is so-called "Explanation Washing" — explanations that sound plausible but do not actually help the user make a better decision. Effective explanations are actionable: they enable users to assess the quality of the AI output and to course-correct when necessary.

Control and Correctability: Keeping Humans in the Decision-Making Role

The central design principle for AI interfaces can be summarized in a single sentence: users must be able to remain in control at all times. This sounds self-evident, but is frequently violated in practice — for example, when AI suggestions are automatically applied, rejection options are hidden, or there is no way to return to the state before the AI intervention.

The levels-of-automation scale by Sheridan and Verplank provides a useful framework. Condensed to three levels, it runs from pure notification (the AI draws attention to something) through suggestions (the AI recommends, the user decides) to supervised automation (the AI acts, the user retains oversight). The appropriate level depends on the cost of errors, reversibility, and the user's expertise. The general rule: when in doubt, choose less automation.
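One way to make the "when in doubt, choose less automation" rule concrete is a small decision function. The sketch below is an assumption about how the three factors might be combined; the thresholds and the name `choose_level` are illustrative and not taken from the framework itself.

```python
from enum import IntEnum


class AutomationLevel(IntEnum):
    NOTIFY = 1      # the AI draws attention to something
    SUGGEST = 2     # the AI recommends, the user decides
    SUPERVISED = 3  # the AI acts, the user retains oversight


def choose_level(error_cost_high: bool, reversible: bool, expert_user: bool) -> AutomationLevel:
    """Pick a level from the three factors; any doubt pushes toward less automation."""
    if error_cost_high and not reversible:
        # Costly and irreversible: the AI should only point things out.
        return AutomationLevel.NOTIFY
    if error_cost_high or not reversible or not expert_user:
        # At least one risk factor remains: recommend, but let the user decide.
        return AutomationLevel.SUGGEST
    # Cheap, reversible, and an experienced user: supervised automation is acceptable.
    return AutomationLevel.SUPERVISED
```

For example, `choose_level(error_cost_high=True, reversible=False, expert_user=True)` yields `AutomationLevel.NOTIFY`, even for an expert user.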

Well-designed AI interfaces therefore offer a consistent set of control mechanisms: one-click undo for immediate reversal, edit-in-place for correcting individual elements, regenerate functions for a fresh attempt with modified context, and global settings that allow users to control the AI's behavior system-wide. Crucially, these functions must not only exist but be visible and easily accessible — not buried in submenus.

In the medical context especially, the "Human in the Loop" principle becomes a non-negotiable design standard. Here, an AI must never make autonomous final decisions — every diagnostic suggestion, every treatment recommendation, every risk assessment must be reviewed and approved by a qualified professional before it affects patients. In my work with MEQO, I experienced how central this principle is to interface design: AI-generated results are deliberately presented as suggestions, never as directives. The interface must not merely allow human validation but actively require it — through mandatory confirmation steps, clear labeling of AI output, and an architecture that keeps the physician in the decision-making role. In regulated fields like healthcare, "Human in the Loop" is not an optional feature but an ethical and legal necessity.
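The idea of requiring, rather than merely allowing, human validation can be illustrated with a small gate in code. This is a hypothetical sketch, not a description of any real product's architecture; `AiSuggestion` and `apply_suggestion` are invented names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AiSuggestion:
    text: str
    approved_by: Optional[str] = None  # identifier of the reviewing professional

    def approve(self, reviewer: str) -> None:
        """Record an explicit sign-off; an empty reviewer is rejected."""
        if not reviewer.strip():
            raise ValueError("a named reviewer is required")
        self.approved_by = reviewer


def apply_suggestion(suggestion: AiSuggestion) -> str:
    """Refuse to pass on any AI output that has not been approved by a human."""
    if suggestion.approved_by is None:
        raise PermissionError("AI suggestion requires human approval before use")
    # The output stays labeled as an AI suggestion even after approval.
    return f"{suggestion.text} (AI suggestion, approved by {suggestion.approved_by})"
```

Because the check lives in the function that applies the result, the confirmation step cannot be skipped by a UI shortcut.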

Error Tolerance: Designing for AI Failure

AI systems make mistakes. Not as an exception, but as a matter of course. Hallucinations, misinterpretations, biases, and outdated information are not bugs that will eventually be fixed, but inherent properties of probabilistic models. The UX implication: error handling must not be treated as an edge case, but must be a central component of interaction design.

A proven pattern is the so-called fallback cascade: when full AI functionality fails, a simplified version is offered. If that also fails, the user receives a template or a structured alternative. As a final step, the case is handed off to a human representative. The user must never end up in a dead end.
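The cascade described above might look roughly like this in code. The stage functions are placeholders invented for illustration; a real system would call its own services at each stage.

```python
from typing import Callable, Optional, Sequence, Tuple

# A stage takes the user's request and returns a result, or None if it cannot help.
Stage = Tuple[str, Callable[[str], Optional[str]]]


def run_cascade(request: str, stages: Sequence[Stage]) -> Tuple[str, str]:
    """Try each stage in order and return (stage_name, result) from the first success."""
    for name, handler in stages:
        try:
            result = handler(request)
        except Exception:
            result = None  # treat a crash like "no result" and move on
        if result is not None:
            return name, result
    # Final step: hand off to a person so the user never hits a dead end.
    return "human_handoff", "Your request was forwarded to a human representative."


# Hypothetical stages for illustration only.
def full_ai(request: str) -> Optional[str]:
    raise TimeoutError("model unavailable")


def simplified_ai(request: str) -> Optional[str]:
    return None  # the simplified version could not produce anything either


def template(request: str) -> Optional[str]:
    return f"Template for: {request}"


STAGES = [("full_ai", full_ai), ("simplified_ai", simplified_ai), ("template", template)]
```

With these placeholder stages, `run_cascade("lesson plan", STAGES)` falls through to the template stage; with an empty stage list it goes straight to the human handoff.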

Equally important is the communication of uncertainty. Rather than suggesting false certainty, AI interfaces should use confidence indicators: visual gradations, linguistic hedging, or explicit percentage values. Below a certain confidence level, the system should automatically switch from assertion mode to suggestion mode: "I'm not sure, but you might try X."
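The switch from assertion mode to suggestion mode can be sketched as a threshold check. The cutoff of 0.7 and the function name `phrase_result` are assumptions for illustration; a suitable threshold depends on the product and its risk level.

```python
SUGGESTION_THRESHOLD = 0.7  # assumed cutoff, not a recommended value


def phrase_result(answer: str, confidence: float,
                  threshold: float = SUGGESTION_THRESHOLD) -> str:
    """Phrase an answer as an assertion or as a hedged suggestion."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= threshold:
        # Assertion mode: state the answer and show an explicit percentage.
        return f"{answer} (confidence: {confidence:.0%})"
    # Suggestion mode: linguistic hedging instead of false certainty.
    return f"I'm not sure, but you might try: {answer}"
```

The same confidence value could also drive a visual gradation, such as a muted color for low-confidence output.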

Human-AI Collaboration: From Tool to Working Partner

The most mature form of AI interaction is genuine collaboration: an iterative process in which humans and machines work together toward a result. Google's People + AI Guidebook (from the PAIR team) and Microsoft's HAX Toolkit document established patterns for this, which can be grouped into three archetypes.

In the augmentation model, the human leads while the AI supports. The AI provides information, drafts, or suggestions that the human can accept, reject, or refine. Typical applications include writing assistants, code completion, and research tools.

In the automation model, the AI leads while the human supervises. The AI handles repetitive tasks independently, and the human intervenes when needed. This is typical for email filters, anomaly detection, or content moderation.

In the partner model, both work as equals. Human and AI refine a shared artifact in iterative cycles, with clear turn-taking, version history, and attribution of contributions.

Regardless of the model, one principle holds: the handoff between human and AI must be explicitly designed. Users must always know whether the AI is currently working, waiting for input, or presenting a result for review. Processing indicators such as "Analyzing documents ..." or "Generating image ..." reduce uncertainty and help users follow what the system is doing.
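Modeling the three handoff states explicitly makes it harder for the interface to leave the user guessing. The sketch below assumes hypothetical names (`AiState`, `status_message`) and invented wording for the waiting and review messages.

```python
from enum import Enum


class AiState(Enum):
    WORKING = "working"
    WAITING_FOR_INPUT = "waiting_for_input"
    READY_FOR_REVIEW = "ready_for_review"


def status_message(state: AiState, task: str = "") -> str:
    """Return the text the interface shows for the current handoff state."""
    if state is AiState.WORKING:
        # Name the concrete task when known, e.g. "Analyzing documents ..."
        return f"{task} ..." if task else "Working ..."
    if state is AiState.WAITING_FOR_INPUT:
        return "Waiting for your input"
    return "Result ready for your review"
```

Since every state maps to a visible message, there is no state in which the interface stays silent.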

Agentic UX: The Next Evolution

With the increasing prevalence of AI agents — systems that autonomously plan and execute multi-step tasks — new design challenges arise that go beyond traditional assistant systems. Users need to understand and approve not just the result, but also the AI's plan.

The key UX principles for agent-based systems include: plan transparency (show users what the agent intends to do before it acts), step-by-step approval (allow sign-off at each step, not just at the end), rollback capability (undo individual steps without losing the entire workflow), and delegation controls (let users decide per task type how much autonomy they grant).
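Three of these principles (plan transparency, step-by-step approval, and rollback) can be sketched in a few lines. The class and callback names are hypothetical, and delegation controls are left out to keep the example short.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Step:
    description: str
    run: Callable[[], None]
    undo: Callable[[], None]


@dataclass
class AgentRun:
    steps: List[Step]
    approve: Callable[[Step], bool]  # hypothetical callback that asks the user
    done: List[Step] = field(default_factory=list)

    def plan(self) -> List[str]:
        """Plan transparency: list every step before anything runs."""
        return [step.description for step in self.steps]

    def execute(self) -> None:
        """Step-by-step approval: stop at the first step the user declines."""
        for step in self.steps[len(self.done):]:
            if not self.approve(step):
                break
            step.run()
            self.done.append(step)

    def rollback_last(self) -> None:
        """Undo only the most recent step; earlier steps stay applied."""
        if self.done:
            self.done.pop().undo()
```

After a rollback, calling `execute()` again offers the undone step for approval once more, so the rest of the workflow is not lost.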

Designing AI interfaces is therefore not a one-time design task, but a continuous process. Trust builds slowly and erodes quickly. A single erroneous result can destroy the trust that many correct results have built. UX designers for AI systems must therefore design the entire trust lifecycle — from initial trust formation through ongoing maintenance to active repair after failures.